As humans, when we talk about our relationship with technology, the word ‘transactional’ mostly comes to mind. But what if our connection to a particular device or machine was an emotional one? How would that change the way we interact with that technology, and what new answers might be born from it? In partnership with our friends at Samsung, we sat down with researcher and recent Pitch Night speaker Mike Seymour to explore his ideas around humanising technology and how he’s putting them into motion.

When his father suddenly suffered a stroke, Mike Seymour discovered something revelatory about our relationship with the technology available to us today: the majority of the devices and machinery we use lack a distinct sense of familiarity.

At the time, Mike was in a predicament: while his father’s new cognitive state still allowed him to recall and relate to those he knew by face and memory, he could no longer use a computer or learn new skills.

Mike was compelled to provide people like his father with more than a helping hand. “We highly value face to face communication. In life, we travel great distances to meet face to face. As humans – we are programmed from birth to react to faces. I want to give people like my father a friendly face to interact with when using technology,” he explained.

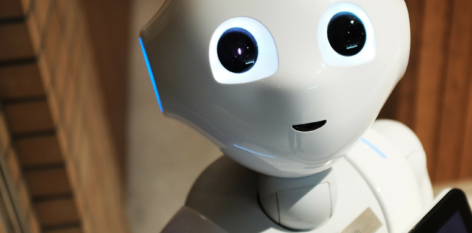

With the surge of robotic advances and AI innovation, movies like Ex Machina, and the rapid rate at which tech is developing, Mike’s ideas around putting a face to technology don’t seem so out of the ordinary. But imagine if, when you spoke to your virtual assistant, it actually appeared as someone you knew: a friend, your next-door neighbour or a family member.

Having spent several years in the visual effects area of the entertainment industry, in research and development and in film production, Mike found this link between technology and a familiar face obvious.

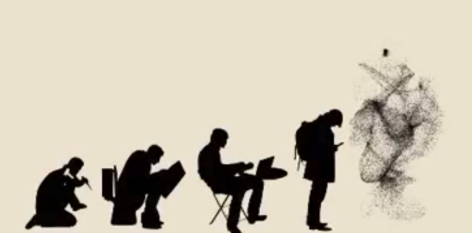

“Currently, the way we interact with our machines isn’t a reflection of how we interact with each other. We find our way around our computers by poking the screen with a finger or via a mouse – it is humourless, blank and submissive. While there are many applications where this is fine, it is hard to believe that there are not also cases where an emotional exchange with a computer would not be a richer more meaningful experience.”

Mike and his team are currently exploring digital humans both as separate ‘agents’ and as digital puppets or avatars. His most powerful revelation, and the moment that put his idea into first gear, came when he observed a friend working with a digital human.

“The reaction of being there in the room was very different from just seeing it on video. I knew that actually interacting with these Digital Humans did something special and created a connection. It is as if the actual interaction, live – in real time – changed the experience. From there, I knew I had to find out more.”

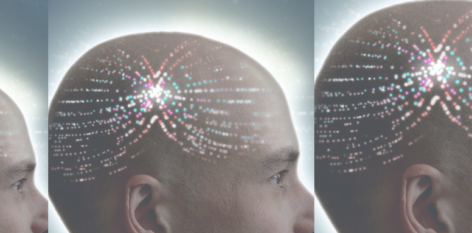

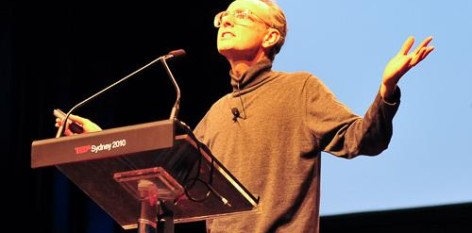

But what makes this different from existing robotics, and are there any cautionary tales to be told? As he said in his recent TEDxSydney talk, “This is all becoming possible due to a perfect storm of faster GPU graphics cards, new machine learning AI technology and incredible advances in game engines. But what makes the technology interesting is that the imagery can be generated in real time. This is a really key point. The faces we are exploring can talk to you, interact and ‘see you’.”

Mike raises the point that it’s important to think carefully about how we map out the future of this technology and who we choose to ‘represent us’.

“More than just looking at the enormous advances in technology, we want to think about some of the ethical issues. Who we choose to digitally represent us, to ‘serve’ us, really matters. And, it really changes what it means to be a ‘user’. Will Digital Makeup reinforce self-image problems for some people? And where is trust in all of this, if anyone can be anyone and say anything? There are so many issues around trust, values and identity – we have a lot of open spaces to explore.”

It seems natural, then, that when Mike’s idea of ‘technology with a familiar face’ comes to fruition, it will first appear on our phones. But how will that work, and why isn’t it happening already for people like Mike’s father?

“It doesn’t make sense that the screen is blank when you ask your voice assistant for advice or ask it a question. We can do better. Your phone is really just your computer today and hardly ever a ‘phone’. Why not use this technology to allow for a richer and more rewarding experience? Don’t we want our mobile to get to know us, get more familiar with us, even just a little bit?”

Mike Seymour holds a Masters and a Bachelor of Science focused on CGI and Pure Maths from the University of Sydney. He currently has a PhD Fellowship researching interactive real-time photoreal faces in new forms of Human-Computer Interfaces.